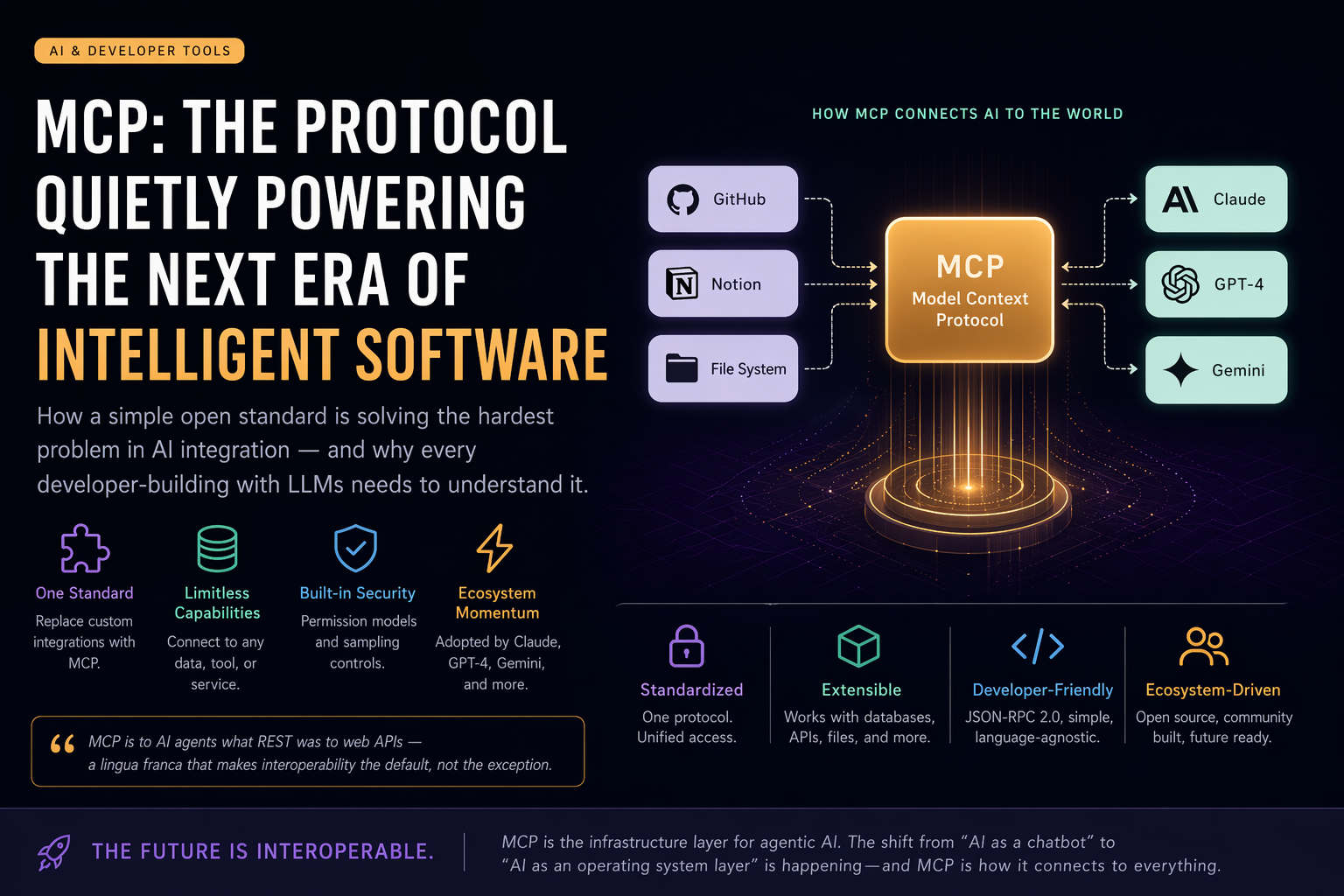

How a simple open standard is solving the hardest problem in AI integration — and why every developer building with LLMs needs to understand it.

How MCP Connects AI to the World


There’s a problem every developer building AI-powered products hits eventually. You have a powerful LLM. You have a mountain of tools — databases, APIs, file systems, SaaS integrations. And connecting them feels like writing a new adapter for every single combination. It doesn’t scale. It doesn’t compose. And it breaks the moment something changes.

That’s the exact problem Model Context Protocol (MCP) was designed to fix — and it’s doing so quietly, but decisively.

What is MCP?

MCP, introduced by Anthropic in late 2024, is an open standard that defines how AI models communicate with external tools and data sources. Think of it as USB-C for AI integrations — instead of every model and every tool building their own proprietary connector, MCP gives you one universal interface that both sides agree to speak.

“MCP is to AI agents what REST was to web APIs — a lingua franca that makes interoperability the default, not the exception.”

Before MCP, if you wanted Claude to read your Notion docs, query a PostgreSQL database, and push updates to GitHub — you’d build three separate, bespoke integrations. Each with its own auth flow, data format, and failure modes. With MCP, you expose those capabilities as a server once, and any MCP-compatible AI model can consume them immediately.
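The scaling argument is easy to see with a little arithmetic. The sketch below is purely illustrative: the function names and the counts (4 models, 25 tools) are assumptions chosen for the example, not measured figures.

```typescript
// Illustrative arithmetic only; the names and numbers are hypothetical.

// Without a shared protocol, every (model, tool) pair needs its own adapter.
function bespokeIntegrations(models: number, tools: number): number {
  return models * tools;
}

// With a shared protocol, each model needs one client and each tool one server.
function protocolIntegrations(models: number, tools: number): number {
  return models + tools;
}

const bespoke = bespokeIntegrations(4, 25); // 4 * 25 = 100 adapters
const shared = protocolIntegrations(4, 25); // 4 + 25 = 29 implementations
```

The gap widens as either side grows, which is why a shared interface pays off more the larger the ecosystem gets.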

The Architecture in Plain Terms

MCP uses a client-server model built on JSON-RPC 2.0. There are three core concepts:

MCP Server

Exposes tools, resources, and prompts from any data source or API.

MCP Client

The connector inside the host app (a chat assistant, an IDE) that discovers the server’s capabilities and calls them on the model’s behalf.

Transport Layer

stdio for local tools, HTTP for remote services (originally paired with SSE, with newer spec revisions moving to Streamable HTTP). Same protocol, different delivery.
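To make the JSON-RPC 2.0 layer concrete, here is a minimal sketch of how a tool call could be framed on the wire. The envelope fields (`jsonrpc`, `id`, `method`, `params`) come from JSON-RPC 2.0 and the `tools/call` method name from MCP; the helper names and the example repository are assumptions for illustration, not part of any SDK.

```typescript
// Sketch of JSON-RPC 2.0 framing as used by MCP. Helper names are hypothetical.
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

interface JsonRpcResponse {
  jsonrpc: "2.0";
  id: number;
  result?: unknown;
  error?: { code: number; message: string };
}

let nextId = 1;

// Build a request envelope; the transport decides how the bytes travel.
function makeRequest(method: string, params?: Record<string, unknown>): JsonRpcRequest {
  const request: JsonRpcRequest = { jsonrpc: "2.0", id: nextId++, method };
  if (params !== undefined) request.params = params;
  return request;
}

// The stdio transport sends one JSON message per line.
function encodeForStdio(message: JsonRpcRequest | JsonRpcResponse): string {
  return JSON.stringify(message) + "\n";
}

// Parse one line back into a response and check that it answers the request.
function decodeResponse(line: string, request: JsonRpcRequest): JsonRpcResponse {
  const parsed = JSON.parse(line) as JsonRpcResponse;
  if (parsed.jsonrpc !== "2.0" || parsed.id !== request.id) {
    throw new Error("Response does not match the pending request");
  }
  return parsed;
}

// Example: the envelope a client might send to invoke a tool.
const callRequest = makeRequest("tools/call", {
  name: "get_github_issues",
  arguments: { repo: "example/repo", state: "open" },
});
const wireLine = encodeForStdio(callRequest);
```

The same envelope would travel unchanged over an HTTP transport; only the delivery step differs.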

A server declares what it can do (“I can read files,” “I can query this database,” “I can send a Slack message”) and advertises each capability with a name, a description, and a JSON Schema for its inputs. The client fetches that list at runtime, for example with a tools/list request, and hands it to the model, which then knows what actions are available and what inputs they need. No hardcoded function signatures. No prompt engineering gymnastics to explain tool capabilities.
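Runtime discovery can be sketched as follows. The descriptor shape (`name`, `description`, `inputSchema`) mirrors what MCP servers return when a client lists tools; the sample tool, the `findProblems` helper, and its deliberately simplified schema check are assumptions for illustration. A real client would use a full JSON Schema validator.

```typescript
// Sketch of runtime discovery. The descriptor shape follows MCP's tool listing;
// the sample data and the simplified validation are hypothetical.
interface ToolDescriptor {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: string; enum?: string[] }>;
    required: string[];
  };
}

// What a server might advertise for one tool.
const githubIssuesTool: ToolDescriptor = {
  name: "get_github_issues",
  description: "List issues for a repository",
  inputSchema: {
    type: "object",
    properties: {
      repo: { type: "string" },
      state: { type: "string", enum: ["open", "closed", "all"] },
    },
    required: ["repo", "state"],
  },
};

const listedTools: ToolDescriptor[] = [githubIssuesTool];

// Check model-proposed arguments against the advertised schema before calling.
// Only handles primitive types whose JSON Schema name matches `typeof`
// ("string", "number", "boolean").
function findProblems(tool: ToolDescriptor, args: Record<string, unknown>): string[] {
  const problems: string[] = [];
  for (const key of tool.inputSchema.required) {
    if (!(key in args)) problems.push(`missing required argument: ${key}`);
  }
  for (const key of Object.keys(args)) {
    const spec = tool.inputSchema.properties[key];
    const value = args[key];
    if (spec === undefined) {
      problems.push(`unknown argument: ${key}`);
    } else if (typeof value !== spec.type) {
      problems.push(`wrong type for ${key}`);
    } else if (spec.enum !== undefined && spec.enum.indexOf(String(value)) === -1) {
      problems.push(`invalid value for ${key}`);
    }
  }
  return problems;
}
```

Because the schema arrives at runtime, the same checking code works for any tool a server adds later, with no client changes.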

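    // A minimal MCP tool definition (TypeScript).
    // Assumes `server` is an McpServer from the MCP TypeScript SDK, `z` is zod,
    // and `octokit` is an authenticated Octokit client.
    server.tool("get_github_issues", {
      owner: z.string(),
      repo: z.string(),
      state: z.enum(["open", "closed", "all"]),
    }, async ({ owner, repo, state }) => {
      // The GitHub REST API needs both the owner and the repository name.
      const { data: issues } = await octokit.rest.issues.listForRepo({ owner, repo, state });
      return { content: [{ type: "text", text: JSON.stringify(issues) }] };
    });

That’s it. One declaration, and any MCP-compatible client can now pull GitHub issues, with inputs validated against a typed schema and zero prompt hacking.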
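One way a handler could surface failures to the model, rather than crashing the server, is sketched below. The result shape (`content` plus an optional `isError` flag) follows MCP's tool result; the `safeToolResult` wrapper and the sample data are hypothetical. The sketch is synchronous to keep it short; a real handler would be async and would `await` inside the same try/catch.

```typescript
// Sketch: turning a thrown error into an MCP-style tool result.
// The wrapper name and the sample payloads are hypothetical.
interface ToolResult {
  content: { type: "text"; text: string }[];
  isError?: boolean;
}

function safeToolResult(run: () => unknown): ToolResult {
  try {
    return { content: [{ type: "text", text: JSON.stringify(run()) }] };
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    // Report the failure to the model so it can react, e.g. retry or ask the user.
    return { content: [{ type: "text", text: `Tool failed: ${message}` }], isError: true };
  }
}

// A call that succeeds and one that fails.
const okResult = safeToolResult(() => [{ number: 1, title: "Example issue" }]);
const failedResult = safeToolResult(() => {
  throw new Error("rate limit exceeded");
});
```

Returning the error as content keeps the conversation going: the model sees what went wrong and can choose a different action.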

Why This Matters More Than It Looks

The real shift MCP enables isn’t technical — it’s economic. Right now, the AI ecosystem is fragmented. OpenAI has function calling with one schema. Claude has tool use with another. Google has yet another. Every integration you build is partially locked to one vendor’s API design decisions.

MCP breaks that lock. A server built once works with Claude, GPT-4, Gemini, or any open-source model that adopts the spec. This is already happening — VS Code Copilot, Cursor, Zed, and dozens of platforms have added MCP support. The ecosystem is moving fast.
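Here is a rough sketch of why one server definition can serve several vendors: a host can translate a single MCP tool descriptor into each vendor's tool format mechanically. The target field names follow the publicly documented OpenAI function-calling and Anthropic tool-use shapes at the time of writing; the adapter functions themselves are hypothetical.

```typescript
// Sketch: one MCP tool descriptor adapted to two vendor tool formats.
// The adapter functions are hypothetical; check vendor docs for current shapes.
type JsonSchema = Record<string, unknown>;

interface McpTool {
  name: string;
  description: string;
  inputSchema: JsonSchema;
}

// OpenAI-style function calling wraps the schema under `function.parameters`.
function toOpenAiTool(tool: McpTool) {
  return {
    type: "function" as const,
    function: {
      name: tool.name,
      description: tool.description,
      parameters: tool.inputSchema,
    },
  };
}

// Anthropic-style tool use puts the schema under `input_schema`.
function toAnthropicTool(tool: McpTool) {
  return {
    name: tool.name,
    description: tool.description,
    input_schema: tool.inputSchema,
  };
}

const issuesTool: McpTool = {
  name: "get_github_issues",
  description: "List issues for a repository",
  inputSchema: { type: "object", properties: { repo: { type: "string" } }, required: ["repo"] },
};
```

The vendor-specific part shrinks to a few lines in the host, while the server stays untouched.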

As of early 2025, there are over 1,000 community-built MCP servers spanning everything from browser automation and Postgres queries to Stripe payments and Figma design files.

Real-World Use Cases for Developers

1. Autonomous Coding Agents

Claude Code acts as an MCP client: alongside its built-in ability to read project files, run terminal commands, and commit code, it can connect to MCP servers for extra capabilities such as browsing docs, all through standardised tool calls. The model doesn’t need special-cased logic for each action. It just calls tools.

2. Enterprise AI Workflows

Imagine an internal AI assistant that can query your CRM, read Confluence docs, check JIRA tickets, and draft emails — without any of those integrations knowing about each other. Each exposes an MCP server. The AI orchestrates them at runtime.
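The orchestration idea can be sketched as a small router inside the host. Everything here is an assumption for illustration: the server names, the tool names, and the `server__tool` naming convention are hypothetical, and the handlers are plain functions standing in for real MCP connections.

```typescript
// Sketch of a host routing tool calls to independent servers.
// Server names, tool names, and the "server__tool" convention are hypothetical.
type ToolHandler = (args: Record<string, string>) => string;

class ToolRouter {
  private handlers = new Map<string, ToolHandler>();

  // Register every tool a server advertises under a server-prefixed name.
  registerServer(server: string, tools: Record<string, ToolHandler>): void {
    for (const name of Object.keys(tools)) {
      const handler = tools[name];
      if (handler !== undefined) this.handlers.set(`${server}__${name}`, handler);
    }
  }

  // The combined tool list the model gets to choose from.
  listTools(): string[] {
    return Array.from(this.handlers.keys()).sort();
  }

  // Dispatch a model-chosen call to whichever server owns the tool.
  call(qualifiedName: string, args: Record<string, string>): string {
    const handler = this.handlers.get(qualifiedName);
    if (handler === undefined) throw new Error(`Unknown tool: ${qualifiedName}`);
    return handler(args);
  }
}

const router = new ToolRouter();
router.registerServer("crm", { find_account: (a) => `account:${a["name"]}` });
router.registerServer("jira", { get_ticket: (a) => `ticket:${a["key"]}` });
```

Neither stand-in server knows the other exists; the router is the only place where they meet, which is the property the paragraph above describes.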

3. Personal Productivity Tools

I built a GitHub Actions job alert system that uses the Claude API to score job listings against my resume. With MCP, this kind of agentic workflow becomes dramatically simpler — your job sources, your email service, and your scoring logic each become an MCP tool, and the model choreographs them naturally.

The Nuances Worth Knowing

MCP isn’t magic. It’s a contract — and like any contract, it only works if both parties honour it. There are real considerations around security (tools can be weaponised via prompt injection), performance (each tool call is a round trip), and trust (should the model be allowed to execute arbitrary shell commands?).

The spec addresses some of this: it asks hosts to obtain user consent before a tool runs and to keep a human in the loop for sampling requests. But building production MCP servers still requires thoughtful design around rate limiting, input validation, and capability scoping. Don’t expose more than the model needs.
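A minimal sketch of capability scoping and rate limiting in front of tool calls might look like this. The class name, the tool names, and the limits are all hypothetical, and the clock is passed in explicitly so the behaviour is easy to test; a production server would likely pair this with schema validation of the arguments.

```typescript
// Sketch of an allowlist plus a sliding-window rate limit for tool calls.
// All names and limits are hypothetical.
class ToolGuard {
  private allowedTools: string[];
  private maxCallsPerWindow: number;
  private windowMs: number;
  private callTimes: number[] = [];

  constructor(allowedTools: string[], maxCallsPerWindow: number, windowMs: number) {
    this.allowedTools = allowedTools;
    this.maxCallsPerWindow = maxCallsPerWindow;
    this.windowMs = windowMs;
  }

  // Returns null when the call may proceed, otherwise a reason for refusing.
  check(toolName: string, nowMs: number): string | null {
    // Capability scoping: anything not explicitly allowed is refused.
    if (this.allowedTools.indexOf(toolName) === -1) {
      return `tool not allowed: ${toolName}`;
    }
    // Keep only the calls that fall inside the current sliding window.
    this.callTimes = this.callTimes.filter((t) => nowMs - t < this.windowMs);
    if (this.callTimes.length >= this.maxCallsPerWindow) {
      return "rate limit exceeded";
    }
    this.callTimes.push(nowMs);
    return null;
  }
}

// Allow two read-only tools, at most 2 calls per 1000 ms.
const guard = new ToolGuard(["read_file", "search_docs"], 2, 1000);
```

Starting from an allowlist rather than a blocklist means a newly added tool stays unreachable until someone decides the model needs it.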

Where MCP is Heading

The trajectory is clear. MCP is becoming the infrastructure layer of the agentic web. Just as REST became the default for web APIs without anyone mandating it, MCP is winning through pragmatism — it solves a real pain, it’s open, and it’s backed by real adoption.

The next phase is composition: AI agents that spawn sub-agents, each with their own MCP toolsets, coordinating on complex tasks autonomously. We’re already seeing early versions of this in Claude’s multi-step tasks and AutoGen-style frameworks.

If you’re building with AI — whether that’s a side project, a SaaS product, or enterprise tooling — learning MCP now puts you a significant step ahead. The shift from “AI as a chatbox” to “AI as an operating system layer” is happening. MCP is the system call interface.
